Building AI as a Front-End Interface on Top of Documentation

For decades, documentation has been a read-only medium. Whether in HTML, PDF, or Markdown, users were expected to find, read, and interpret information manually. Even as we layered search indexes and tagging systems on top of documentation portals, the process still depended on the user’s ability to form the right query and navigate through long pages. The rise of large language models (LLMs) fundamentally changed that dynamic. Suddenly, it became possible to ask questions in natural language and get meaningful answers distilled from large bodies of text.

This led to a new paradigm: using AI as a front-end interface for documentation. Instead of clicking through navigation trees or running keyword searches, users interact conversationally. They can ask questions like “How do I authenticate my app?”, “What permissions does the API need?”, or “Give me a Python example for file upload.” In theory, the model should understand the question, retrieve the relevant documentation, and answer directly. In practice, achieving the accuracy and trust this requires is far from trivial.

The Central Problem: Accuracy and Trust

When a model produces text without being grounded in your content, it risks giving confident answers that are completely wrong, a failure commonly known as hallucination. In the context of developer documentation, hallucinations are fatal. An AI that suggests an incorrect parameter, an outdated endpoint, or an unsupported configuration can cause integration failures or even system downtime. That’s why building an AI documentation interface requires an architecture that strictly controls what the model can access and how it formulates answers.

This is where Retrieval-Augmented Generation (RAG) becomes essential.

RAG: Grounding the Model in Real Documentation

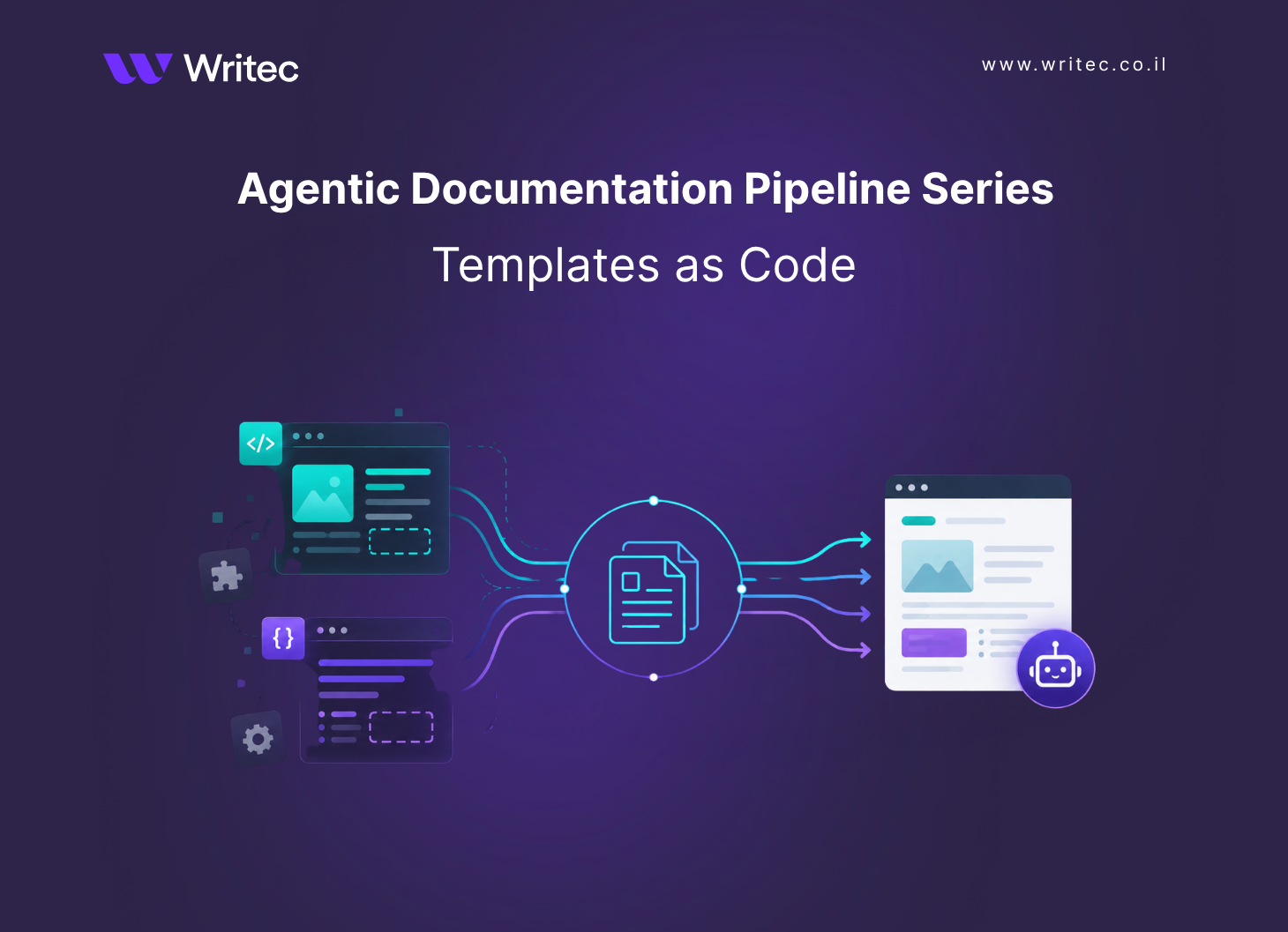

At its core, RAG is a hybrid architecture that combines the following:

- Retrieval - fetching factual data

- Generation - producing natural language

Instead of letting the model rely on its internal training data, RAG forces it to base its responses on retrieved content from a trusted corpus, which in this case is your documentation.

Here’s what happens step by step:

- Ingestion and Chunking - the documentation is parsed into structured text units. These can come from Markdown files, Confluence pages, API specs, or PDF manuals. Because LLMs have context size limits (the number of tokens they can “see” at once), large documents are split into small, semantically coherent chunks. Typically these are paragraphs or sections of 300–800 words. Each chunk maintains metadata (such as filename, section heading, version, and language) to preserve traceability.

- Embedding and Indexing - each chunk is then converted into a vector representation using an embedding model such as text-embedding-3-large (OpenAI) or bge-base (BAAI, available on Hugging Face). A vector captures the semantic meaning of text: similar sentences map close to each other in a high-dimensional space. These vectors are stored in a vector database like Pinecone, Weaviate, or Qdrant, forming the searchable “memory” of your documentation.

- Retrieval at Query Time - when a user asks a question (e.g., “How do I generate an API token?”), the query is embedded into a vector as well. The system searches the vector database for the most semantically similar chunks. Usually, the top 3–10 results are retrieved, along with their metadata. This limits the model’s input to the most relevant documentation excerpts, which are only as up to date as the index itself.

- Contextual Generation - these retrieved chunks are concatenated into a single context block and sent to the LLM alongside the user’s question. The prompt typically includes explicit grounding instructions such as: “Answer using only the context provided. Do not make up information not found in the context.” The model then synthesizes a coherent, human-readable answer using the documentation as its factual base.

- Source Attribution and Confidence - finally, the system returns the response with source citations or snippets. Many implementations highlight which document or section the answer was derived from, allowing users to verify accuracy.
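The ingestion, indexing, and retrieval steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the in-memory list stands in for a vector database, and all names (`Chunk`, `chunk_markdown`, `retrieve`) are hypothetical. It also assumes Markdown input in which headings start with `#`.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    source: str   # file the chunk came from
    heading: str  # nearest section heading, kept for traceability


def chunk_markdown(source: str, text: str, max_words: int = 300) -> list[Chunk]:
    """Pack paragraphs into chunks of at most max_words, split at headings."""
    chunks: list[Chunk] = []
    buffer: list[str] = []
    heading = ""

    def flush() -> None:
        if buffer:
            chunks.append(Chunk(" ".join(buffer), source, heading))
            buffer.clear()

    for block in re.split(r"\n\s*\n", text.strip()):
        block = block.strip()
        if not block:
            continue
        if block.startswith("#"):
            flush()  # close the previous section under its own heading
            heading = block.lstrip("#").strip()
            continue
        words_so_far = sum(len(p.split()) for p in buffer)
        if buffer and words_so_far + len(block.split()) > max_words:
            flush()
        buffer.append(block)
    flush()
    return chunks


def embed(text: str) -> Counter:
    """Toy embedding (assumption): word counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, index: list[tuple[Chunk, Counter]], k: int = 3) -> list[Chunk]:
    """Return the k chunks whose vectors are most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


doc = """# Authentication

Generate an API token from the dashboard settings page.

# File upload

Send a multipart POST request to upload a file."""

chunks = chunk_markdown("guide.md", doc)
index = [(c, embed(c.text)) for c in chunks]
top = retrieve("How do I generate an API token?", index, k=1)
print(top[0].source, ">", top[0].heading)
```

In a real system, `embed` would call an embedding model and `index` would live in a vector database, but the flow of data is the same: chunks carry their metadata all the way through, so the final answer can cite where it came from.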

By grounding the model in this way, hallucinations become far less likely, though not impossible: the model can still misread or overextend the context it is given. The LLM still performs the linguistic work, but the factual foundation comes from your vetted documentation.
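Much of this grounding comes down to how the prompt is assembled. The sketch below shows one possible way to combine retrieved chunks, the grounding instruction quoted earlier, and numbered source labels into a single prompt. The `Retrieved` type, the function names, and the extra "cite by number" instruction are assumptions made for illustration; the call to the LLM itself is deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class Retrieved:
    text: str
    source: str   # file the excerpt came from
    heading: str  # section heading within that file


GROUNDING_INSTRUCTION = (
    "Answer using only the context provided. "
    "Do not make up information not found in the context."
)


def build_prompt(question: str, chunks: list[Retrieved]) -> str:
    """Concatenate numbered excerpts and the question into one grounded prompt."""
    context = "\n\n".join(
        f"[{i}] ({c.source} > {c.heading})\n{c.text}"
        for i, c in enumerate(chunks, start=1)
    )
    return (
        f"{GROUNDING_INSTRUCTION}\n"
        "Cite sources by their bracketed number.\n\n"  # assumption: not in the article
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


def citations(chunks: list[Retrieved]) -> list[str]:
    """Source list to show next to the answer so users can verify it."""
    return [f"[{i}] {c.source} > {c.heading}" for i, c in enumerate(chunks, start=1)]


retrieved = [
    Retrieved(
        "Generate an API token from the dashboard settings page.",
        "auth.md",
        "API tokens",
    )
]
prompt = build_prompt("How do I generate an API token?", retrieved)
print(prompt)
print(citations(retrieved))
```

Numbering the excerpts gives the model something concrete to cite and lets the interface map each citation back to a document and section.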

Conclusion

Using AI as a front-end interface for documentation is not about replacing writers or abandoning structure; it’s about making documentation accessible in the way humans naturally communicate. The key challenge is accuracy, and the key to accuracy is grounding. Retrieval-Augmented Generation turns LLMs from unreliable text generators into far more dependable doc assistants that reflect the source of truth. This is the next step in the evolution of technical communication: from reading documentation to talking to it.